Most people do not struggle because they lack ideas. They struggle because turning a rough musical idea into something listenable usually asks for too many separate skills at once. Melody, arrangement, vocal tone, pacing, style decisions, and production polish tend to arrive as one creative burden. That is part of why an AI Music Generator can feel useful in practice. It reduces the distance between intention and first output, which matters when the bigger problem is not inspiration but momentum.

That does not mean the process becomes automatic in a deep artistic sense. In my observation, tools like this are less about replacing judgment and more about making judgment usable earlier. Instead of waiting until the end to discover whether an idea has emotional weight, you can hear the direction sooner. For creators, marketers, hobbyists, and even people who simply want a fast draft, that changes the pace of decision-making.

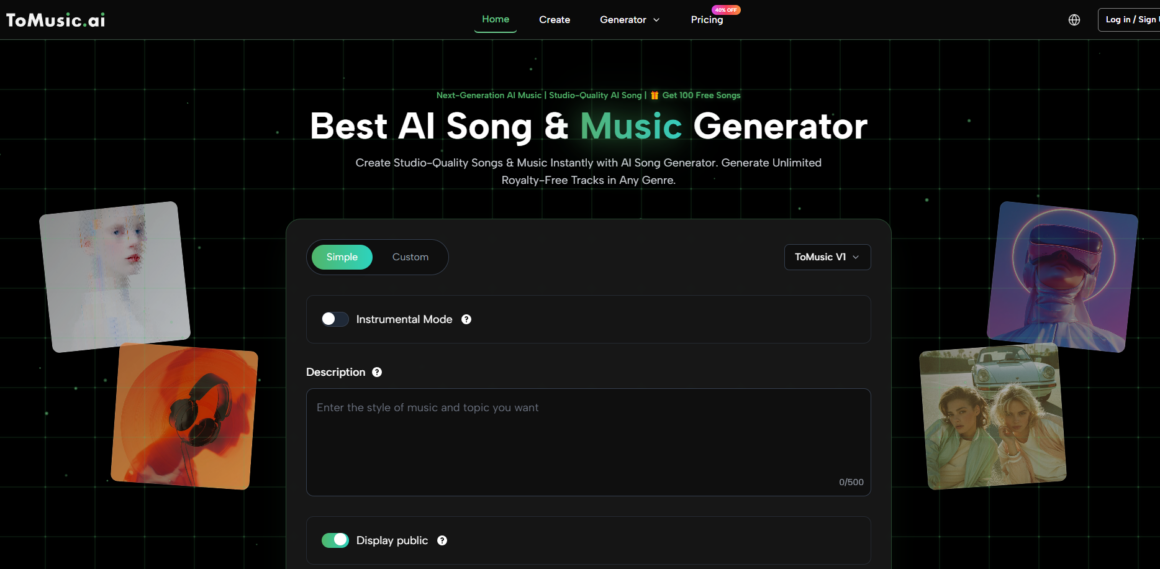

ToMusic is built around this faster loop. The official workflow is straightforward: choose a creation mode, set musical guidance, add lyrics if needed, and generate. The simplicity matters because many people approaching AI music are not looking for a new technical discipline. They want to test whether a phrase, mood, or concept can become a song without building an entire production environment first.

Why Faster Drafting Changes Musical Decision Making

Traditional music production often begins with commitment before feedback. You pick a chord path, shape a demo, or spend time on arrangement details before you know whether the song direction is emotionally convincing. That can be rewarding, but it also creates friction. A fast-generation platform changes the order. You can evaluate emotional direction earlier and save the more serious refinement for later.

Early audio feedback reduces vague creative uncertainty

A written idea like “warm cinematic pop with reflective female vocals” can sound specific on paper while still being musically uncertain. The gap between language and sound is where many ideas stall. A generated draft closes that gap quickly enough for you to react while the original impulse is still fresh.

Iteration becomes a creative filter, not failure

One useful shift is psychological. If a first version misses the tone, that result does not necessarily mean the idea was weak. It may simply mean the instruction was too broad, the mood tags were incomplete, or the lyric structure needed more control. In that sense, repeated generation is not always waste. It becomes part of selection.

How ToMusic Organizes The Creation Workflow

The platform’s design suggests that it wants to keep music generation approachable without removing all control. On the creation page, the official inputs include a model choice, Simple or Custom mode, an Instrumental option, fields for title and styles, category-style guidance such as genre, moods, voices, and tempos, plus a lyrics field for full songs.

Simple mode favors speed over detailed planning

Simple mode is the lower-friction path. It suits moments when you want to test a concept quickly instead of building a carefully specified song brief. For early ideation, that can be enough.

Custom mode supports more intentional song shaping

Custom mode is where the platform becomes more interesting. You can add title, style direction, and full lyrics, which creates a more deliberate bridge between idea and output. On the official page, the lyrics field and style controls make it clear that the system is not limited to generic background music.

Instrumental choice changes the role of the output

The Instrumental setting is important because it changes the use case. Sometimes the goal is a finished song with vocals. In other cases, a creator needs backing music for a video, a draft atmosphere for a campaign, or a non-vocal track for editing. This option keeps the platform relevant beyond lyric-based songwriting.

What The Official Controls Suggest About Creative Intent

One reason ToMusic feels more structured than a one-line novelty generator is that the interface separates musical intent into understandable layers. Title, styles, genre, mood, voice, tempo, and lyrics are not infinitely deep controls, but they are enough to encourage clearer thinking.

| Aspect | What the official page shows | Why it matters in practice |
| --- | --- | --- |
| Creation mode | Simple and Custom | Supports both quick drafts and more directed songs |
| Output type | Vocal or Instrumental | Lets users choose between song and background music |
| Guidance fields | Title, Styles, Genre, Moods, Voices, Tempos | Helps shape the result beyond one vague prompt |
| Lyrics support | Dedicated lyrics field | Makes lyric-driven generation a core use case |
| Workspace | My Music Studio | Suggests an ongoing library rather than one-off generation |

This structure matters because better AI results often come from better framing, not just better models. A platform that nudges users to specify mood, style, and vocal direction is indirectly teaching them how to ask for more coherent music.

How Lyrics Become More Useful With Clear Structure

Many people assume lyric-based generation works best when lyrics are poetic and fully polished. In my testing of similar systems, that is not always true. What often helps more is structural clarity. The official ToMusic guidance mentions support for tags such as verse, chorus, bridge, intro, and outro, which points to an important idea: organization can matter as much as literary sophistication.

Section tags can improve song-level coherence

When lyrics are broken into recognizable sections, the model has stronger clues about repetition, energy shifts, and pacing. That does not guarantee a perfect song, but it often improves the odds that the result feels intentional rather than flattened.
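To make the idea of section-level structure concrete, here is a minimal sketch of how a lyric draft might be organized before pasting it into a lyrics field. The section names come from the tags mentioned above; the bracketed `[Verse]` style and the `format_lyrics` helper are assumptions for illustration, not confirmed ToMusic syntax, so check the platform's own guidance for the exact form it expects.

```python
# Hypothetical helper. The bracketed tag style is an assumption,
# not confirmed ToMusic syntax.
SECTION_NAMES = ("intro", "verse", "chorus", "bridge", "outro")


def format_lyrics(sections):
    """Join (section_name, lines) pairs into one tagged lyrics string."""
    blocks = []
    for name, lines in sections:
        key = name.strip().lower()
        if key not in SECTION_NAMES:
            raise ValueError(f"unknown section: {name!r}")
        # Strip whitespace and drop empty lines so each block stays compact.
        body = "\n".join(line.strip() for line in lines if line.strip())
        blocks.append(f"[{key.capitalize()}]\n{body}")
    # A blank line between sections keeps the structure visible.
    return "\n\n".join(blocks)


draft = format_lyrics([
    ("verse", ["Streetlights hum along the river", "I keep your letter in my coat"]),
    ("chorus", ["Stay a little longer", "Stay a little longer"]),
])
print(draft)
```

Writing the draft as a list of sections also makes it easy to reorder or repeat a chorus between rounds without retyping the whole lyric.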

Literal language can outperform over-decorated wording

One common mistake is writing lyrics that are so abstract they become difficult to perform convincingly. Clear emotional lines, memorable repetition, and section-level contrast usually travel better through a generative system than dense symbolic writing. That is not a limitation unique to AI. It is also true in many human-written pop structures.

A Practical Four-Step Music Drafting Method

The official workflow can be understood as a four-step process. Keeping it this simple is useful because it preserves speed without pretending the result will always be final on the first try.

Step 1. Choose mode, model, and output type

Start by selecting Simple or Custom mode, choose the available model, and decide whether the track should be instrumental or vocal-based. This first choice determines how much control you plan to exercise.

Step 2. Add style signals and lyrical direction

Enter the title, style notes, and any available guidance around genre, mood, voice, and tempo. If you want a full song, add lyrics in the dedicated field and organize sections where relevant.

Step 3. Generate and listen for directional accuracy

Generate the track and focus first on whether the emotional direction is right. At this stage, it is usually more helpful to ask “Is this the correct song identity?” than “Is every detail finished?”

Step 4. Save, compare, and refine inside your studio

Use the saved output in your music studio as a reference point for additional rounds. This is where Text to Music becomes more than a novelty. It becomes a repeatable drafting loop.
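The four steps above can also be kept as a small written brief, so each round of generation starts from a recorded set of choices. The sketch below is a local note-taking structure only: the field names mirror the inputs described on the creation page, but `SongBrief` and its checks are hypothetical, and nothing here calls a ToMusic API.

```python
from dataclasses import dataclass, field


@dataclass
class SongBrief:
    """Hypothetical local record of one generation attempt."""
    mode: str = "simple"          # "simple" or "custom"
    instrumental: bool = False    # True for a non-vocal track
    title: str = ""
    styles: list = field(default_factory=list)
    genre: str = ""
    moods: list = field(default_factory=list)
    voice: str = ""
    tempo: str = ""
    lyrics: str = ""

    def problems(self):
        """Return readable issues to fix before generating.

        These checks are illustrative assumptions, not platform rules.
        """
        issues = []
        if self.mode not in ("simple", "custom"):
            issues.append("mode must be 'simple' or 'custom'")
        if self.instrumental and self.lyrics.strip():
            issues.append("instrumental brief should not carry lyrics")
        if self.mode == "custom" and not self.instrumental and not self.lyrics.strip():
            issues.append("custom vocal brief has no lyrics")
        return issues


brief = SongBrief(
    mode="custom",
    title="River Letter",
    genre="pop",
    moods=["reflective"],
    voice="female",
    tempo="slow",
)
print(brief.problems())
```

Keeping one brief per attempt makes the comparison in Step 4 easier, because you can see which setting changed between two saved drafts.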

Where This Platform Fits Better Than Expected

A lot of people still frame AI music only as a shortcut for non-musicians. That interpretation is too narrow. The more realistic value appears when speed itself has strategic value.

Content teams can test sonic directions faster

A social team may need multiple tonal variations around one message: reflective, energetic, playful, cinematic. Generating early options helps them decide what fits the campaign before investing more editing time.
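One way to plan those variations is to hold everything constant except the mood, so the drafts differ only in tone. The following is a rough sketch using plain dictionaries; the keys, the example title, and the `tonal_variants` helper are illustrative assumptions, and each resulting brief would still be entered by hand on the creation page.

```python
# Hypothetical local sketch: plain dictionaries, no ToMusic API involved.
BASE_BRIEF = {
    "title": "Spring Launch",
    "genre": "pop",
    "instrumental": True,
}

TONES = ["reflective", "energetic", "playful", "cinematic"]


def tonal_variants(base, tones):
    """Return one copy of the brief per tone, each with a single mood."""
    variants = []
    for tone in tones:
        variant = dict(base)  # shallow copy so the base stays unchanged
        variant["moods"] = [tone]
        variant["title"] = f"{base['title']} ({tone})"
        variants.append(variant)
    return variants


for v in tonal_variants(BASE_BRIEF, TONES):
    print(v["title"], v["moods"])
```

Giving each variant a single mood keeps the comparison clean: if one draft works better, the tone is the only thing that changed.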

Independent creators can prototype identity sooner

Writers, video editors, or solo founders often know the feeling they want but not how to compose it. A structured platform lets them hear brand or narrative direction before they hire, buy, or build more.

Musicians can use outputs as reaction material

Even for musicians, the platform can serve as material to react to rather than a finished product. A generated song draft may reveal a stronger chorus concept, a better tempo idea, or a vocal mood worth developing in a more manual environment.

What To Keep In Mind Before Expecting Precision

Useful does not mean flawless. Music generation still depends heavily on prompt clarity and the relationship between style instructions and lyric content. A strong concept can produce a weak draft if the request is too crowded or contradictory.

Broad prompts often produce broader musical identities

If you ask for too many moods at once, the result may feel undecided. In practice, tighter requests usually lead to more convincing outputs than encyclopedic ones.

A first result is not always the best result

This is worth stating clearly, because realistic expectations are what make a tool trustworthy. The platform accelerates creation, but it does not remove curation. Some songs will land quickly. Others will need multiple attempts before the direction feels stable enough to keep.

Revision is part of the real workflow

That is not a hidden weakness. It is part of how generative systems are actually used. You are shaping probability, not issuing a frame-by-frame command.

Why The Tool Matters More As A Draft Engine

The most interesting thing about ToMusic is not that it makes music from text. Many tools make that claim. The more meaningful point is that it organizes early music judgment into a workflow people can actually use. You choose a mode, set intent, generate, and compare. That sounds simple, but simplicity is often what turns experimentation into a habit.

For people who already hear songs in fragments, this kind of platform can reduce friction. For people who do not write music traditionally, it can make song drafting feel less inaccessible. And for teams working under time pressure, it can move the conversation from abstract descriptions to audible options surprisingly fast.

That, in my view, is the practical value. The platform is not important because it eliminates the need for taste. It is important because it lets taste enter the process earlier, when direction is still flexible and creative energy is easier to preserve.